A quick refresher…

QUALITATIVE RISK ANALYSIS

Qualitative analysis, which we have used up to this point, is usually subjective and ultimately relies on the judgment of individuals.

It is the examination, analysis, and interpretation of observations to discover underlying meanings and patterns of relationships.

This is why the experience of experts can give us confidence in their qualitative findings.

Even so, two or more experts may look at the same information and reach two different conclusions.

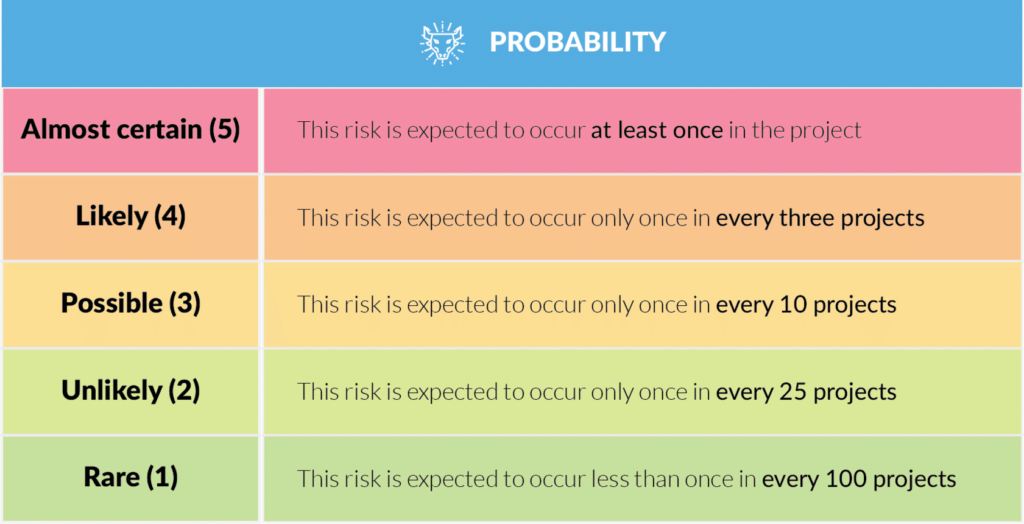

In the narrow context of project risk analysis, this means that different stakeholders may have different perceptions of what counts as, for example, ‘high probability’ or ‘high impact’.

You could argue that this is a risk in itself. One response is to agree a standard set of risk definitions in the risk management plan.

The essential difference between qualitative and quantitative risk analysis is that qualitative analysis allows you to compare risks relative to each other (this is our risk ranking, the main output of the process). In contrast, quantitative analysis assigns hard, empirical values to assets, expected losses, and the cost of controls.
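To make the idea of a relative ranking concrete, here is a minimal Python sketch. It assumes a three-level ordinal scale and a probability-times-impact score, which is one common convention rather than anything this course prescribes. The scale values, the `rank_risks` helper, and the sample risks are all hypothetical.

```python
# Hypothetical shared definitions, of the kind a risk management plan might fix.
SCALE = {"low": 1, "medium": 2, "high": 3}


def rank_risks(risks):
    """Sort (name, probability_label, impact_label) tuples by ordinal score.

    Returns a list of (score, name) pairs, highest score first.
    """
    scored = [(SCALE[prob] * SCALE[impact], name) for name, prob, impact in risks]
    # Descending score, then name, so that ties are ordered predictably.
    return sorted(scored, key=lambda pair: (-pair[0], pair[1]))


# Hypothetical register entries rated by judgment, not measurement.
sample_risks = [
    ("Key supplier delivers late", "medium", "high"),
    ("Minor scope change requested", "high", "low"),
    ("Data centre outage", "low", "high"),
    ("Team member unavailable", "medium", "medium"),
]

ranking = rank_risks(sample_risks)
for score, name in ranking:
    print(f"{score}  {name}")
```

The scores only put the risks in order. A score of 6 does not mean twice the exposure of a score of 3, which is exactly the limitation that quantitative analysis tries to address.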

QUANTITATIVE RISK ANALYSIS

Quantitative analysis, on the other hand, works with numerical data and anything else that can be measured precisely.

The most common method is statistical analysis.

You might think this is more reliable; however, the garbage-in, garbage-out rule applies.

Quantitative findings are only as good as the reliability and relevance of the data.

Subjectivity can still significantly color the outcomes, right down to the choice of statistical method.

What is it they say about lies, damned lies, and statistics?

Statistical methods

You might be surprised to learn that you have already covered a couple of quantitative methods of analyzing risk.

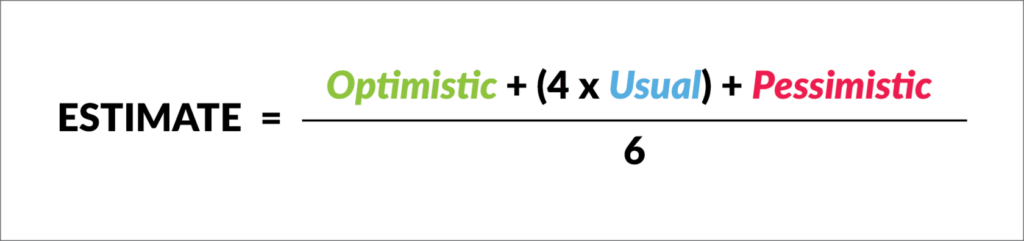

A three-point estimate, for example, is a quantitative method that accounts for the best-case and worst-case risk scenarios when making an estimate.
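As a reminder of the arithmetic, the sketch below computes a three-point estimate in Python. The text above does not say which weighting to use, so the sketch shows two widely used options as assumptions: the simple (triangular) average and the PERT weighted average, plus the usual PERT rule of thumb for the standard deviation. The function name and the sample figures are hypothetical.

```python
def three_point_estimate(optimistic, most_likely, pessimistic):
    """Return (triangular_mean, pert_mean, pert_std_dev) for one estimate."""
    if not optimistic <= most_likely <= pessimistic:
        raise ValueError("expected optimistic <= most_likely <= pessimistic")
    # Simple average: all three points carry equal weight.
    triangular_mean = (optimistic + most_likely + pessimistic) / 3
    # PERT average: the most likely value carries four times the weight.
    pert_mean = (optimistic + 4 * most_likely + pessimistic) / 6
    # Common PERT approximation of the spread around the estimate.
    pert_std_dev = (pessimistic - optimistic) / 6
    return triangular_mean, pert_mean, pert_std_dev


# Hypothetical task: best case 10 days, most likely 14 days, worst case 24 days.
tri, pert, std_dev = three_point_estimate(10, 14, 24)
print(f"triangular: {tri:.1f} days, PERT: {pert:.1f} days, std dev: {std_dev:.2f} days")
```

With these inputs the simple average is 16 days and the PERT average is 15 days, because the PERT formula pulls the estimate towards the most likely value.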

Similarly, we used the expected monetary value technique in the project initiation stage to analyze the aggregate risk of different scenarios.

We will revisit this in the next topic on contingency planning.
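For reference, expected monetary value is the sum of each outcome's probability multiplied by its monetary impact. The Python sketch below illustrates that calculation only. The scenario names, probabilities, and amounts are invented for illustration, and the `expected_monetary_value` helper is hypothetical.

```python
def expected_monetary_value(outcomes):
    """Sum probability * monetary impact over (probability, impact) pairs."""
    for probability, _ in outcomes:
        if not 0.0 <= probability <= 1.0:
            raise ValueError("each probability must be between 0 and 1")
    return sum(probability * impact for probability, impact in outcomes)


# Hypothetical scenarios. Negative impacts are losses, positive impacts are gains.
scenarios = {
    "Build in-house": [(0.30, -40_000), (0.10, -15_000), (0.20, 25_000)],
    "Outsource": [(0.15, -60_000), (0.25, -10_000)],
}

emv = {name: expected_monetary_value(items) for name, items in scenarios.items()}
for name, value in emv.items():
    print(f"{name}: {value:,.0f}")
```

Under these made-up figures, building in-house carries the smaller expected loss, but the result is only as trustworthy as the probabilities and amounts fed into it.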

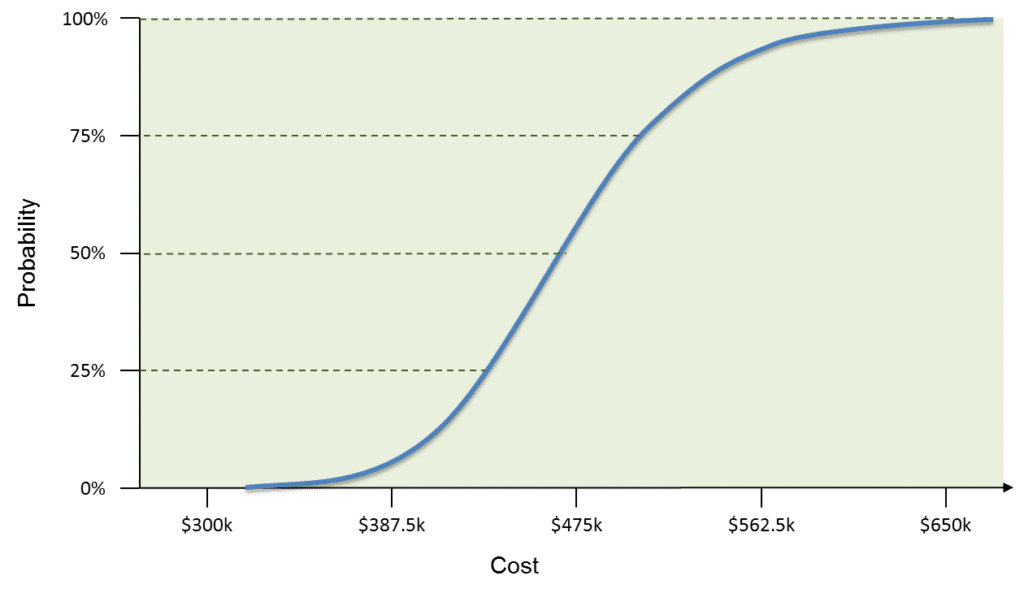

A related simulation technique, Monte Carlo analysis, uses a model that translates the specified uncertainties of the project into their potential impact on project objectives.

Monte Carlo simulation approximates the probability of certain outcomes by running thousands, or even hundreds of thousands, of trials, called iterations, each using randomly sampled input values.

It lets you see, in a probability distribution, all the possible outcomes of your decisions and assess the impact of risk, allowing for better decision-making under uncertainty.

For a cost risk analysis, a Monte Carlo simulation would use cost estimates.

The output from a cost–risk simulation is shown here.

Similar curves can be developed for schedule outcomes using the schedule network diagram and duration estimates.
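For the curious, a cost simulation of this kind can be sketched in a few lines of Python using only the standard library. This is a minimal illustration under stated assumptions, not the method used by any particular tool: each cost item is assumed to follow a triangular distribution, the items are assumed to be independent of each other, and the item values, function names, and seed are all hypothetical.

```python
import random
import statistics


def simulate_total_cost(items, iterations=10_000, seed=42):
    """Return a sorted list of simulated total project costs.

    Each item is (low, most_likely, high). Every iteration draws one cost per
    item from a triangular distribution and adds the draws together.
    """
    rng = random.Random(seed)  # fixed seed so the sketch is repeatable
    totals = [
        sum(rng.triangular(low, high, mode) for low, mode, high in items)
        for _ in range(iterations)
    ]
    totals.sort()
    return totals


def percentile(sorted_values, fraction):
    """Return the value below which roughly `fraction` of the results fall."""
    index = min(len(sorted_values) - 1, int(fraction * len(sorted_values)))
    return sorted_values[index]


# Hypothetical cost items: (optimistic, most likely, pessimistic)
cost_items = [
    (8_000, 10_000, 15_000),
    (4_000, 5_000, 9_000),
    (2_000, 2_500, 4_000),
]

totals = simulate_total_cost(cost_items)
mean_cost = statistics.mean(totals)
p50 = percentile(totals, 0.50)
p80 = percentile(totals, 0.80)
print(f"mean: {mean_cost:,.0f}  P50: {p50:,.0f}  P80: {p80:,.0f}")
```

The sorted totals are the raw material for a cumulative probability curve: the P80 figure, for instance, is the budget that about 80% of the simulated runs stayed within.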

You are not expected to be able to perform Monte Carlo simulations to pass this course; it is shown here as an example of one of the more sophisticated quantitative methods that are available to you.

Meta-risk

The main problem in using quantitative methods to analyze project risk is that, in many cases, there is insufficient empirical data to run the statistical models.

This is especially true in projects, given that many are inherently unique or unusual undertakings for an organization.

Similarly, the more complex our quantitative model, the more opaque our analysis becomes.

In other words, it becomes more difficult for the average stakeholder to understand how we arrived at our conclusions.

Quite often, some or all of the model inputs are subject to sources of uncertainty, including measurement errors, absence of information, and poor or partial understanding of the driving forces and mechanisms.

This uncertainty limits our confidence in the response or output of the model – an uncertainty that is itself a risk we should analyze and respond to!

For that reason, we neither express a preference nor make any recommendation as to which method is best.

Nonetheless, if the intention of project risk management is to anticipate and control uncertainty, then one of the challenges is to find the right mix – in the context of the work to be performed – of diagnostic and analytic tools.